Governed AI vs Autonomous AI: Choosing the Right Customer Experience Approach

AI in customer experience software now does more than suggest replies or sort tickets. Some systems wait for a person to approve an action, while others can act on their own.

That difference changes how support teams handle refunds, account updates, escalations, and sensitive customer data. It also changes who is accountable when an AI action is incorrect.

In customer service, the question is not only whether AI is present. The question is how much authority the AI has inside the workflow.

This article explains the difference in simple terms. The goal is to separate two ideas that are often grouped together: governed AI and autonomous AI.

What governed and autonomous AI mean for customer experience

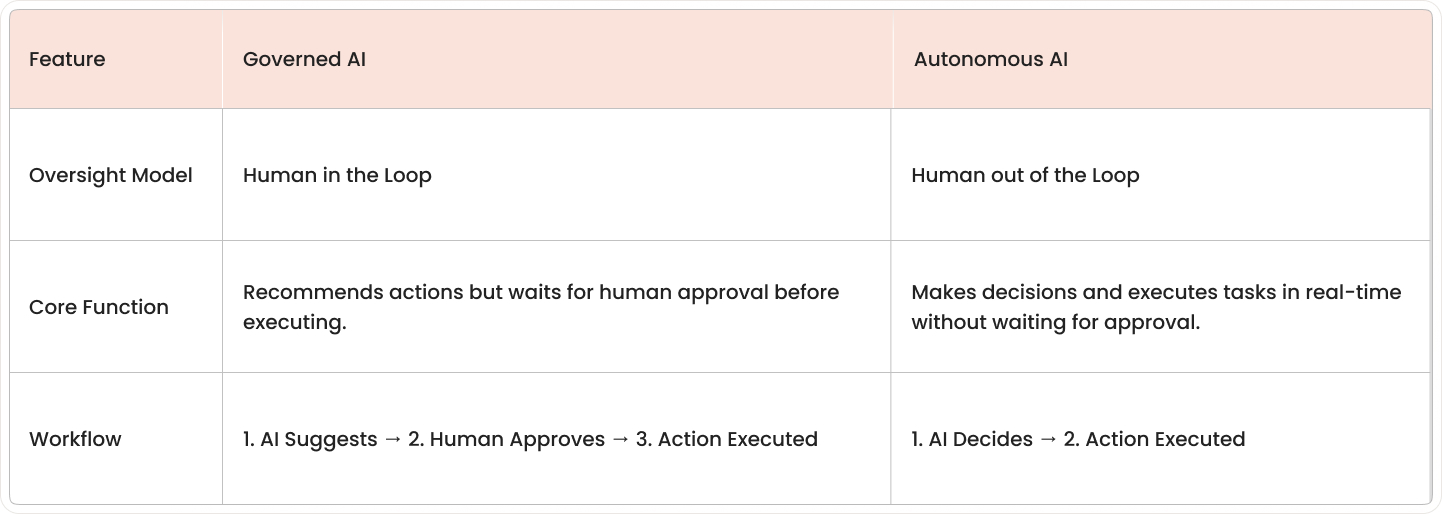

Governed AI and autonomous AI describe two different levels of AI decision-making inside customer support software. The main difference is whether a person approves the action before the system carries it out.

- Governed AI: AI that recommends actions but requires human approval before executing

- Autonomous AI: AI that learns and makes real-time decisions without waiting for human approval

Governed AI works like a trained assistant. It can draft a reply, recommend a refund path, suggest a knowledge article, or identify the next best action. Still, a human agent or manager confirms the step before the system completes it.

Autonomous AI works more like an independent operator inside a defined process. It can classify conversations, respond to common questions, update records, trigger workflows, and, in some cases, resolve issues without waiting for a person.

Customer experience platforms often include both models simultaneously. One workflow may use autonomous AI to sort incoming tickets and governed AI to recommend a credit, escalate a complaint, or send a final response for approval.

How governed and autonomous AI differ in customer service

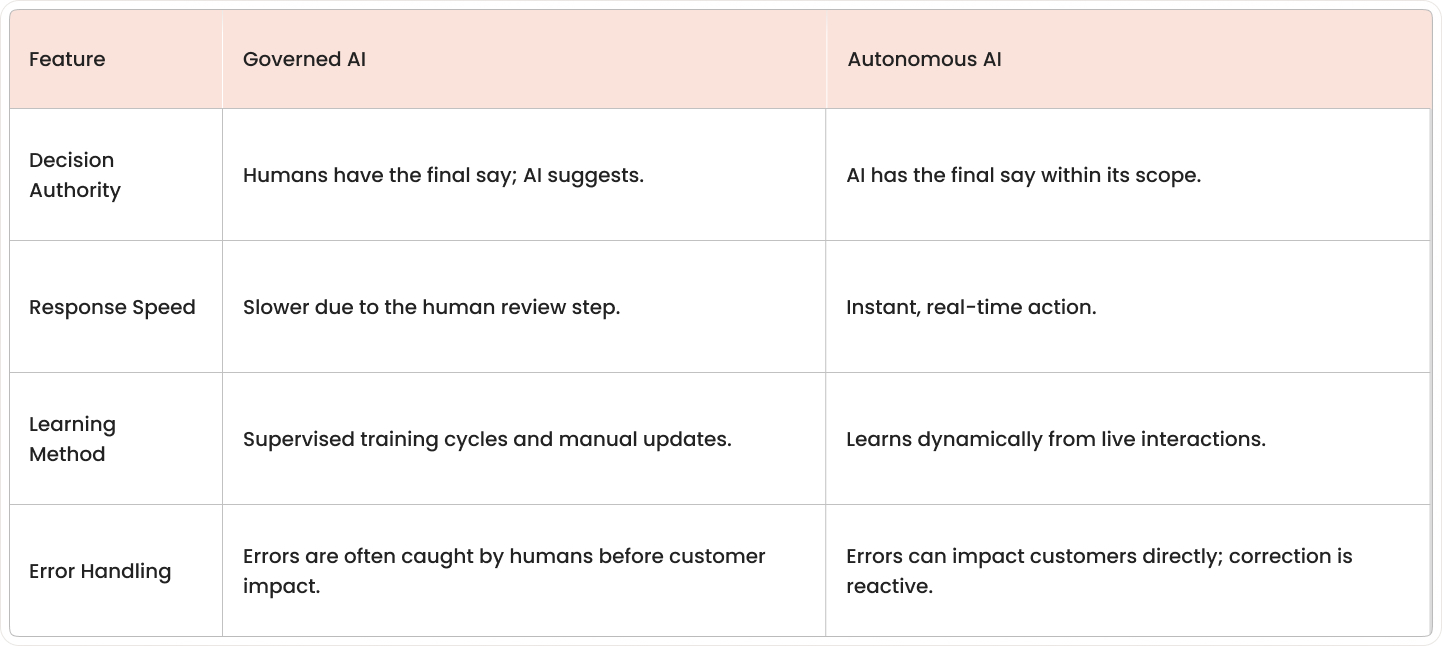

The main difference appears in how customer service software handles judgment, speed, learning, and mistakes. CX leaders usually compare both models by asking who approves actions, how fast responses happen, how the system improves, and what happens when something goes wrong.

Decision-making authority and human oversight

Decision-making authority means who has the final say before an action reaches a customer or changes a record. In a governed setup, AI recommends a step, and a person approves it; in an autonomous setup, the AI completes the step on its own.

A ticket routing example makes the difference clear. Governed AI may suggest sending a billing complaint to a retention team and then wait for an agent or supervisor to confirm, while autonomous AI sends the ticket immediately based on its own rules and learned patterns.
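
The routing example can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's API: the names `Mode`, `RoutingProposal`, `route_ticket`, and the `pending_review` queue are all hypothetical. The only difference between the two modes is whether an approval callback has to return `True` before the ticket moves.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Mode(Enum):
    GOVERNED = "governed"
    AUTONOMOUS = "autonomous"


@dataclass
class RoutingProposal:
    """What the AI suggests: move this ticket to this queue (hypothetical shape)."""
    ticket_id: str
    target_queue: str


def route_ticket(
    proposal: RoutingProposal,
    mode: Mode,
    approve: Optional[Callable[[RoutingProposal], bool]] = None,
) -> str:
    """Return the queue the ticket lands in.

    Autonomous mode applies the proposal immediately. Governed mode applies
    it only if a human approval callback confirms it; otherwise the ticket
    waits in a review queue.
    """
    if mode is Mode.AUTONOMOUS:
        return proposal.target_queue
    if approve is not None and approve(proposal):
        return proposal.target_queue
    return "pending_review"


proposal = RoutingProposal(ticket_id="T-1", target_queue="retention")
autonomous_result = route_ticket(proposal, Mode.AUTONOMOUS)
governed_unreviewed = route_ticket(proposal, Mode.GOVERNED)
governed_approved = route_ticket(proposal, Mode.GOVERNED, approve=lambda p: True)
```

In a real deployment the approval callback would be a supervisor action in the support tool rather than a function argument, but the control point is the same.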

Real-time response speed and adaptability

Response speed is one of the clearest differences between the two models. Autonomous AI reacts in real time because it does not pause for approval, while governed AI includes a review step that adds time.

Speed matters most in high-volume, repeatable interactions such as order status questions, password resets, or basic account checks. Oversight matters more when a reply could affect money, privacy, compliance, or customer trust.

Learning behaviors and continuous improvement

Learning behavior describes how the AI changes after more customer interactions. Autonomous AI often updates its behavior dynamically by using feedback, outcomes, and new conversation patterns as they happen.

Governed AI usually improves through supervised training cycles. Teams review outputs, adjust rules, retrain models, and approve changes before updated behavior appears in production.

When autonomous AI creates risk in customer experience

Autonomous AI can save time, but it can also create risk when it acts without enough oversight. The risk becomes larger when the system handles unusual requests, regulated data, public-facing responses, or tools that were never approved.

Unpredictable responses to edge cases

Edge cases are customer situations that fall outside normal patterns. These cases include mixed billing disputes, unusual refund histories, conflicting account details, or messages that combine several problems in one conversation.

Autonomous AI works best when the request matches patterns it has seen before. When the request is unusual or unclear, the system may guess the intent and produce an answer that does not fit the situation.

Brand reputation and customer trust

Customer trust can drop quickly after one visible AI mistake. A single reply that sounds careless, inaccurate, biased, or inappropriate can shape how a customer views the entire company.

These incidents are often described as "AI gone rogue" moments. The phrase refers to cases where the AI acts in a way that looks uncontrolled, even if the system was following internal logic.

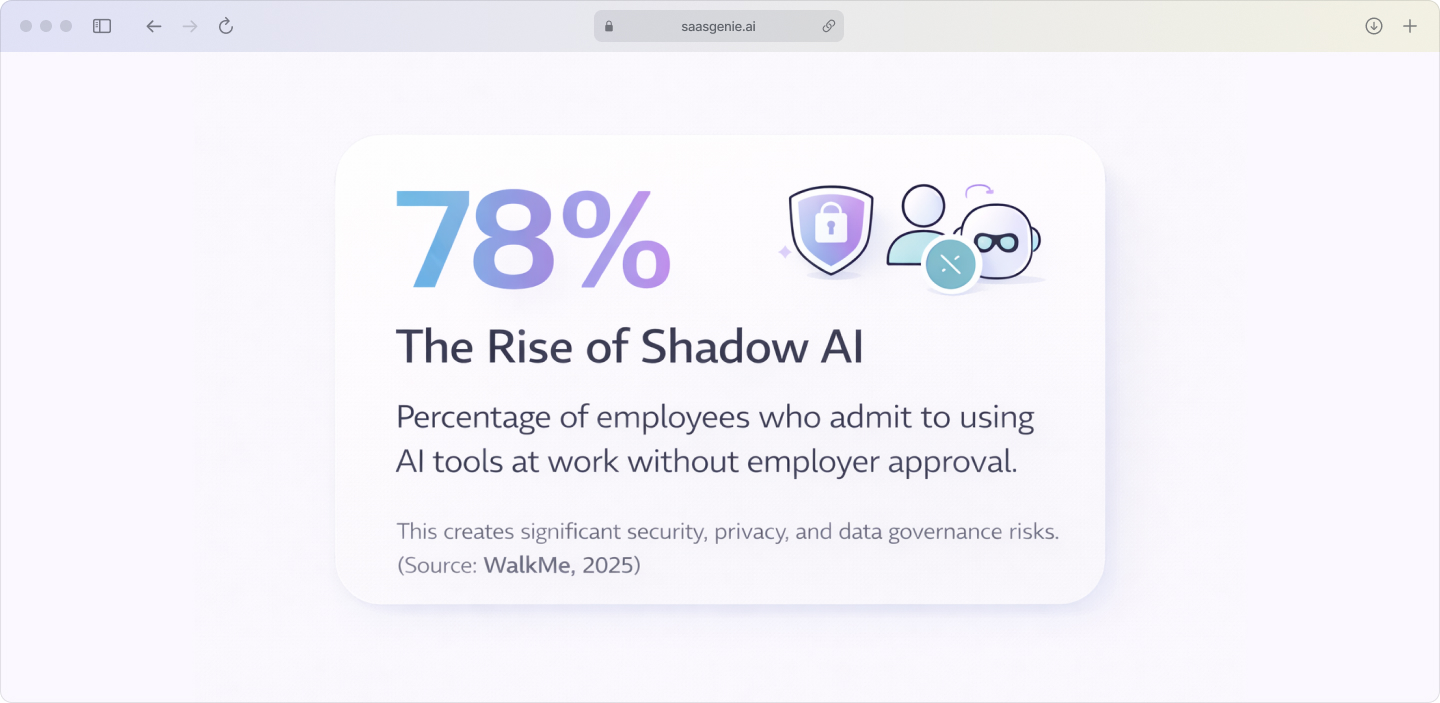

Shadow AI and unauthorized tool adoption

Shadow AI refers to employees using AI tools that the organization has not approved. According to WalkMe's 2025 survey, 78% of employees admit to using such tools. This can happen when teams copy customer messages into public AI apps, use unreviewed browser extensions, or connect outside bots to support workflows.

Shadow AI creates risk because it sits outside formal security, privacy, and workflow controls. Customer data may be shared with unknown systems, stored in the wrong place, or processed without proper review. Gartner projects that over 40% of AI-related data breaches by 2027 will stem from unapproved or improper generative AI use.

Why AI governance is architecture, not just policy

AI governance in customer service is often described as a set of rules. In practice, governance also depends on how the customer experience platform is built.

A written policy can say that AI responses require review, that refunds above a limit require approval, or that sensitive cases go to a human agent. If the software cannot enforce those steps, the policy remains separate from daily work.

- Policy alone fails: Written rules without technical controls get ignored.

- Architecture enforces: Built-in guardrails ensure consistent AI behavior.

- Visibility matters: You need audit logs and dashboards to track AI decisions.

Workflow automation becomes part of governance when it controls the path a case follows through AI-assisted service management. A complaint about a refund, for example, can be routed into a queue where AI drafts a response, a supervisor reviews the draft, and the final message is sent only after approval.
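
The difference between a written policy and an enforced one can be shown with a small sketch. The class below is an assumption made for illustration (`DraftReply`, its status values, and its log format are not taken from any platform): the draft cannot be sent until a supervisor has approved it, and each step is written to an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class DraftReply:
    """An AI-drafted reply that moves through drafted -> approved -> sent."""
    case_id: str
    text: str
    status: str = "drafted"
    approved_by: Optional[str] = None
    audit_log: List[str] = field(default_factory=list)

    def _log(self, event: str) -> None:
        # Timestamp every event so reviewers can reconstruct who did what.
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{stamp} case={self.case_id} {event}")

    def approve(self, supervisor: str) -> None:
        if self.status != "drafted":
            raise ValueError(f"cannot approve a reply in status {self.status!r}")
        self.status = "approved"
        self.approved_by = supervisor
        self._log(f"approved by {supervisor}")

    def send(self) -> None:
        # The guardrail: the software itself refuses to skip the review step.
        if self.status != "approved":
            raise PermissionError("draft must be approved before sending")
        self.status = "sent"
        self._log("sent to customer")


draft = DraftReply(case_id="C-1", text="We have processed your refund.")
draft.approve("supervisor-1")
draft.send()
```

Calling `send()` on an unapproved draft raises an error instead of relying on an agent to remember the rule, which is the sense in which architecture enforces what policy only states.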

Which customer experience platforms include AI governance controls

AI governance controls vary by platform. As of 2026, the main differences are where human review happens, how admin teams set limits, and how clearly the platform records AI actions.

Freshworks Freddy AI governance features

Freshworks includes governance controls across customer service and service management workflows through Freddy AI, role permissions, workflow automation, and admin settings. The platform can keep AI in an assistive role, where it suggests replies, summaries, field values, or next steps while agents or supervisors decide what gets sent or approved.

Human review can be placed inside customer-facing flows in several ways:

- Topic-based routing: Conversations move to agents based on subject matter.

- Confidence thresholds: Low-confidence AI responses trigger human review.

- Workflow approvals: Actions like credits or escalations require manager approval.

- Business hours controls: After-hours requests route to human queues.
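
As a rough illustration of how these four review triggers combine, the sketch below evaluates them in order. It is not Freddy AI's configuration format or API. The topic list, the 0.80 threshold, the business hours, and every name in the code are assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import time


@dataclass
class AiReply:
    """A proposed AI response (hypothetical shape)."""
    topic: str
    confidence: float      # model confidence between 0.0 and 1.0
    proposes_credit: bool  # True if the reply would issue a credit


# Assumed settings; a real team would tune these to its own policy.
HUMAN_ONLY_TOPICS = {"fraud", "cancellation"}
MIN_CONFIDENCE = 0.80
OPEN_AT, CLOSE_AT = time(9, 0), time(17, 0)


def needs_human_review(reply: AiReply, received_at: time) -> bool:
    """Return True if any review trigger applies to this reply."""
    if reply.topic in HUMAN_ONLY_TOPICS:         # topic-based routing
        return True
    if reply.confidence < MIN_CONFIDENCE:        # confidence threshold
        return True
    if reply.proposes_credit:                    # workflow approval
        return True
    if not (OPEN_AT <= received_at < CLOSE_AT):  # business hours control
        return True
    return False


routine = needs_human_review(AiReply("shipping", 0.95, False), time(10, 0))
uncertain = needs_human_review(AiReply("shipping", 0.60, False), time(10, 0))
```

Here `routine` is `False` and `uncertain` is `True`: the same topic goes to a person once the confidence score falls below the assumed threshold.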

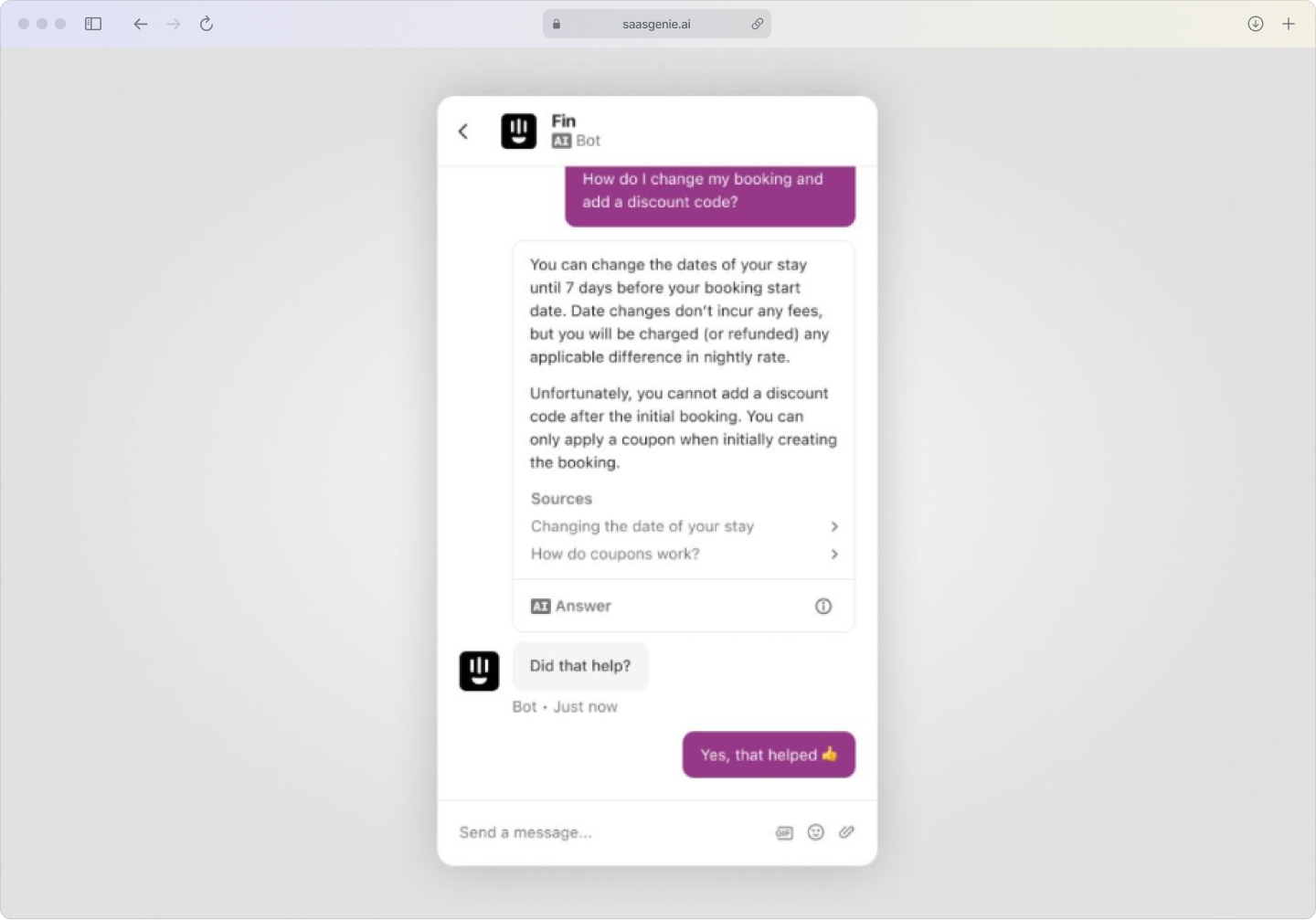

Intercom Fin AI control and oversight settings

Intercom Fin is built for conversational support, so governance appears mainly in answer control, handoff design, and conversation monitoring. Teams can define what content Fin uses to answer questions, which gives some control over the information source behind customer replies.

Handoff triggers are a key oversight tool in Fin. A conversation can move to a human agent when the request is outside supported topics, when customer intent is unclear, or when the discussion becomes sensitive or complex.

How enterprise platforms compare on AI oversight

Larger enterprise platforms often offer broader AI oversight frameworks within their ITSM governance structures. Those frameworks may include more advanced audit controls, policy management, model governance, segregation of duties, and compliance tooling across large environments.

- Freshworks: Strong out-of-the-box governance for mid-market teams.

- Intercom: Flexible controls with easy configuration.

- Atlassian: Growing governance features with recent AI updates.

- Enterprise platforms: More robust controls, but longer implementation timelines.

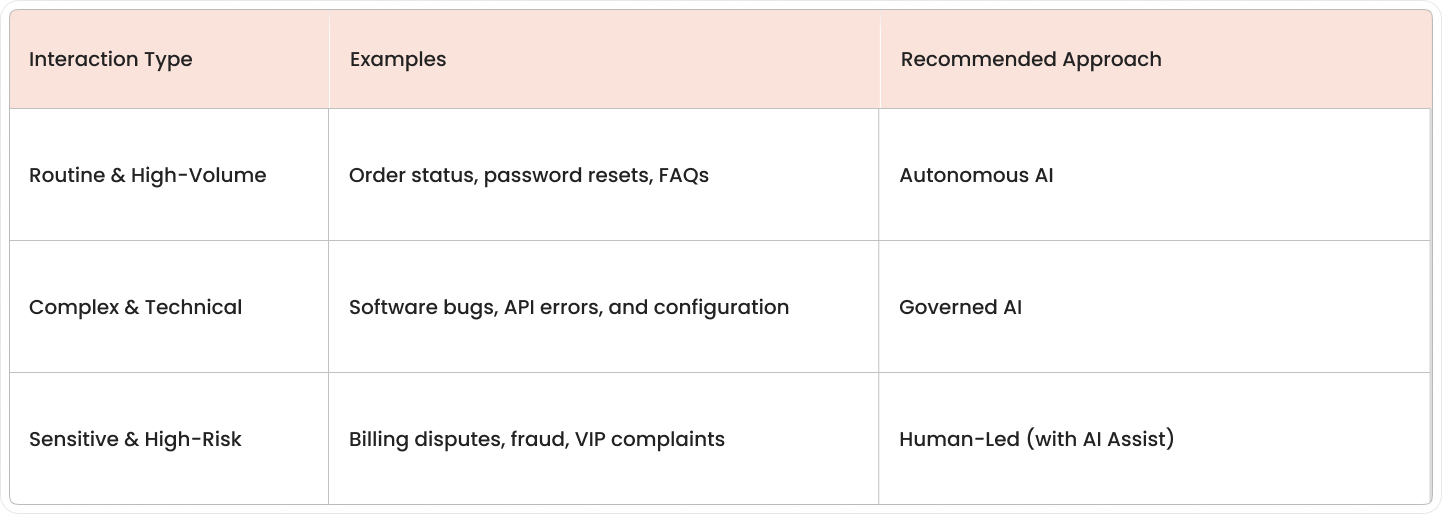

How to match AI approaches to your customer interactions

Different customer interactions call for different levels of AI control. The best fit depends on how complex the request is, how much risk is involved, and whether a human judgment call is part of the process.

High-volume routine service requests

Autonomous AI fits routine requests that follow a clear pattern and have a small chance of causing harm:

- Password resets and account unlocks

- Order status and shipping updates

- Basic FAQ responses and product information

- Appointment confirmations and reminders

Complex technical support scenarios

Governed AI fits technical support cases that involve diagnosis, multiple possible causes, or a chain of system checks. Examples include software failures, API errors, product configuration issues, and problems that affect several users or systems at once.

In these situations, the AI can help by summarizing the issue, pulling related knowledge, or suggesting the next troubleshooting step. A human agent then reviews the recommendation and decides what to test, send, or escalate.

Sensitive customer situations and complaints

Human involvement fits conversations where emotion, judgment, or relationship management matters more than speed:

- Refund disputes and billing complaints

- Cancellation requests and retention scenarios

- Fraud concerns and security issues

- VIP customer handling and escalations

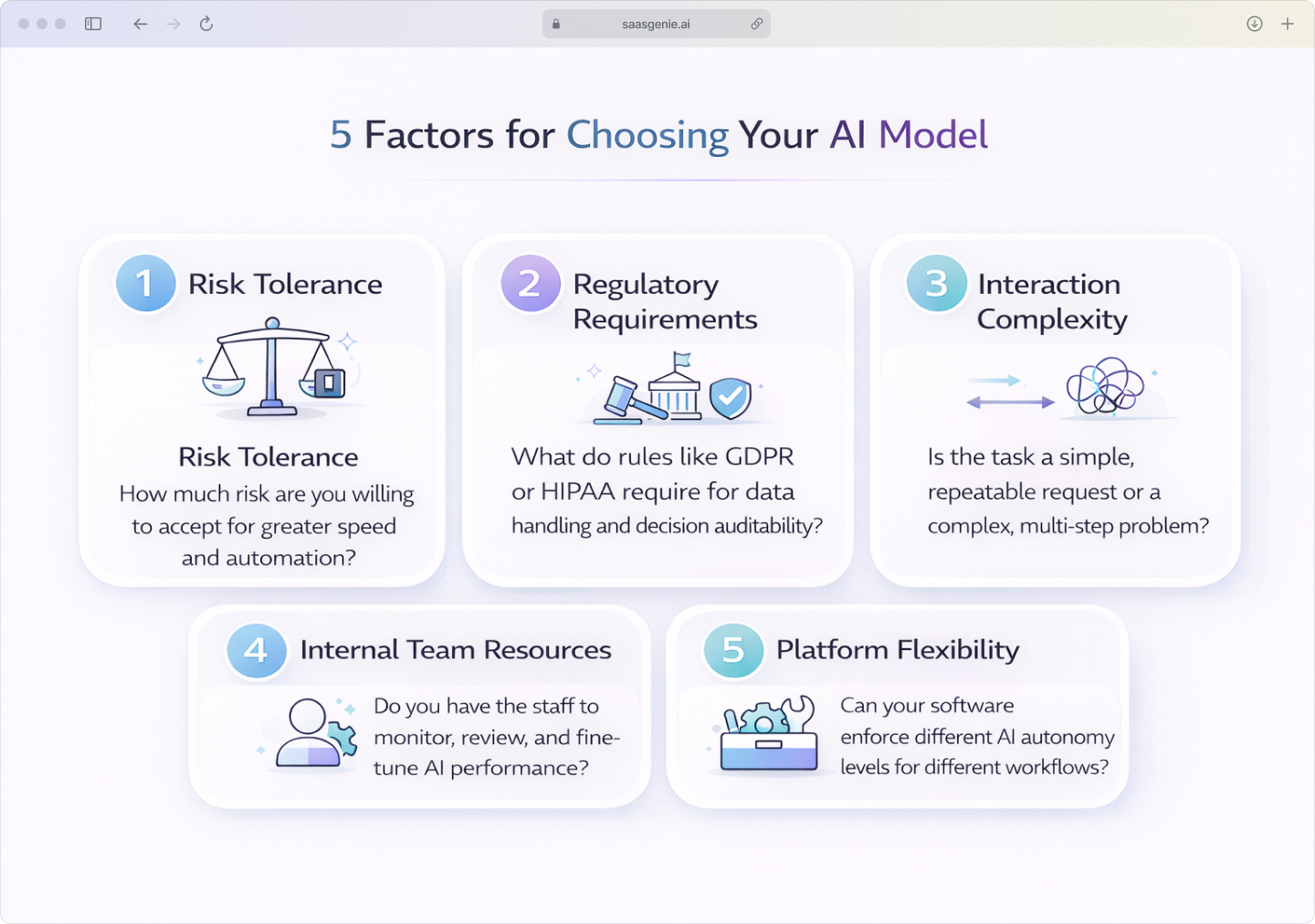

Five decision factors for choosing your AI customer service model

A customer service team can compare governed AI and autonomous AI by looking at five practical factors. Each factor changes how much control the AI has, how much oversight people keep, and how much risk the system carries.

1. Your organization's risk tolerance

Risk tolerance means how much uncertainty leadership accepts when a system acts on its own. Some organizations accept a higher chance of mistakes in exchange for faster automation, while others place more weight on control and review.

A conservative organization often prefers governed AI for customer-facing actions such as refunds, policy exceptions, and account changes. A growth-focused organization may allow more autonomous AI in routine workflows if the financial and reputational impact of an error is limited.

2. Industry regulatory and compliance requirements

Regulations affect how much human oversight is required or expected in an AI workflow. In some industries, the main issue is not whether AI is used, but whether the organization can explain the decision, track the action, and show who approved it.

HIPAA affects how protected health information is handled in healthcare settings. GDPR affects personal data processing, automated decision-making, and transparency in many regions, while financial services rules often focus on recordkeeping, disclosures, and controlled decision paths.

3. Customer interaction complexity

Interaction complexity changes how much autonomy fits the task. A simple request usually has one clear intent and one clear answer, while a complex request may involve multiple issues, unclear wording, or policy judgment.

Autonomous AI fits interactions such as shipment updates, password resets, appointment reminders, and basic product questions. Governed AI fits troubleshooting, billing disputes, contract questions, and service failures that depend on context across several systems or past interactions.

4. Internal AI expertise and team resources

AI oversight depends on the people managing the system, not only the software itself. According to Deloitte's 2026 report, only one in five companies has a mature model for governance of autonomous AI agents. A team with analysts, admins, compliance reviewers, and strong support operations can often manage more autonomous workflows than a team with limited monitoring capacity.

Autonomous AI works best when staff can review outputs, tune workflows, track failure patterns, and respond when behavior changes. Governed AI fits teams that want AI support inside clearer guardrails because review is built into the process rather than handled mostly after deployment.

5. Platform governance tool flexibility

Platform flexibility refers to how well the software lets a team set limits on AI actions. The main question is whether the platform can match the organization's actual rules for approvals, routing, permissions, logging, and escalation.

Some platforms allow different autonomy levels by queue, channel, issue type, or customer segment. That setup makes it possible to run autonomous AI for low-risk requests and governed AI for high-risk interactions inside the same environment.
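
One way to picture per-queue autonomy is a lookup table with a safe default. The sketch below is hypothetical: the channel names, issue types, and the VIP rule are assumptions, and real platforms expose this through admin settings rather than code. The point is that anything not explicitly marked as low risk falls back to governed handling.

```python
from typing import Dict, Tuple

# Assumed policy table: (channel, issue type) -> autonomy level.
AUTONOMY_POLICY: Dict[Tuple[str, str], str] = {
    ("chat", "order_status"): "autonomous",
    ("chat", "password_reset"): "autonomous",
    ("email", "billing_dispute"): "governed",
    ("email", "cancellation"): "governed",
}

# Unknown combinations default to the more cautious level.
DEFAULT_LEVEL = "governed"


def autonomy_level(channel: str, issue_type: str, vip: bool = False) -> str:
    """Return 'autonomous' or 'governed' for one incoming request."""
    if vip:
        # Customer segment overrides the table: VIP cases always get review.
        return "governed"
    return AUTONOMY_POLICY.get((channel, issue_type), DEFAULT_LEVEL)


level_routine = autonomy_level("chat", "order_status")
level_vip = autonomy_level("chat", "order_status", vip=True)
level_unknown = autonomy_level("sms", "warranty_claim")
```

Defaulting to `governed` means a new issue type gets human review until someone deliberately classifies it as safe to automate.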

How to roll out a governed AI strategy

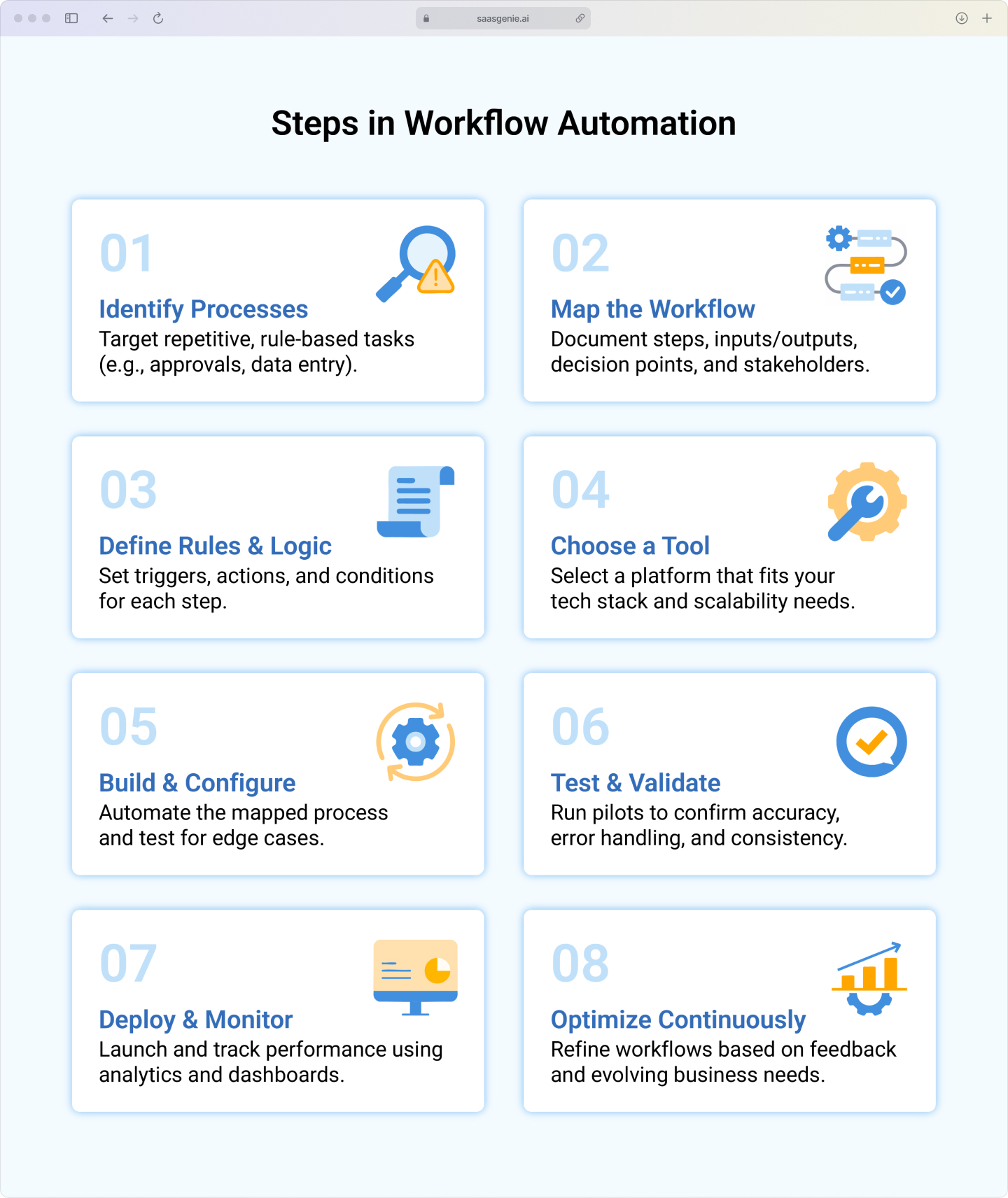

A practical CX AI rollout usually begins with a clear map of customer interactions. The map groups requests by risk, complexity, volume, and data sensitivity so each workflow can be matched to the right level of AI control.

The next step is often a workflow review. Teams document where AI can draft, classify, summarize, route, or resolve, and where a human review point stays in place.

A limited pilot is common in the first phase. Many teams begin with one low-risk use case, such as FAQ handling or intake triage, and then measure accuracy, escalation patterns, exception rates, and agent override activity.
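
The pilot measurements can be computed from a simple log of handled conversations. The sketch below assumes a hypothetical record with three yes/no fields per conversation. The field names and sample data are invented for illustration, and a real pilot would define "correct" through its own quality review process.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class PilotRecord:
    """Outcome of one AI-handled conversation during the pilot (assumed fields)."""
    ai_correct: bool      # reviewer judged the AI output correct
    escalated: bool       # conversation was handed to a human
    agent_overrode: bool  # an agent changed or rejected the AI output


def pilot_metrics(records: Iterable[PilotRecord]) -> Dict[str, float]:
    """Return accuracy, escalation rate, and override rate as fractions of all records."""
    rows = list(records)
    if not rows:
        raise ValueError("no pilot records to measure")
    total = len(rows)
    return {
        "accuracy": sum(r.ai_correct for r in rows) / total,
        "escalation_rate": sum(r.escalated for r in rows) / total,
        "override_rate": sum(r.agent_overrode for r in rows) / total,
    }


sample = [
    PilotRecord(ai_correct=True, escalated=False, agent_overrode=False),
    PilotRecord(ai_correct=True, escalated=False, agent_overrode=False),
    PilotRecord(ai_correct=True, escalated=True, agent_overrode=False),
    PilotRecord(ai_correct=False, escalated=True, agent_overrode=True),
]
metrics = pilot_metrics(sample)
```

Tracking the override rate alongside accuracy helps show whether agents trust the AI's suggestions in practice, not only whether the suggestions pass review.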

Platform fit also affects the rollout path. The review usually focuses on whether the system can support different autonomy levels across channels, queues, customer segments, and case types without creating separate manual controls outside the platform.

saasgenie has experience helping organizations implement AI-first CX solutions with governance built into workflows from day one. That work typically covers platform selection, process design, automation setup, admin controls, and ongoing optimization across Freshworks and Intercom environments.